Demystifying A/B Testing: 5 Powerful Ways to Improve Your Conversion Rates

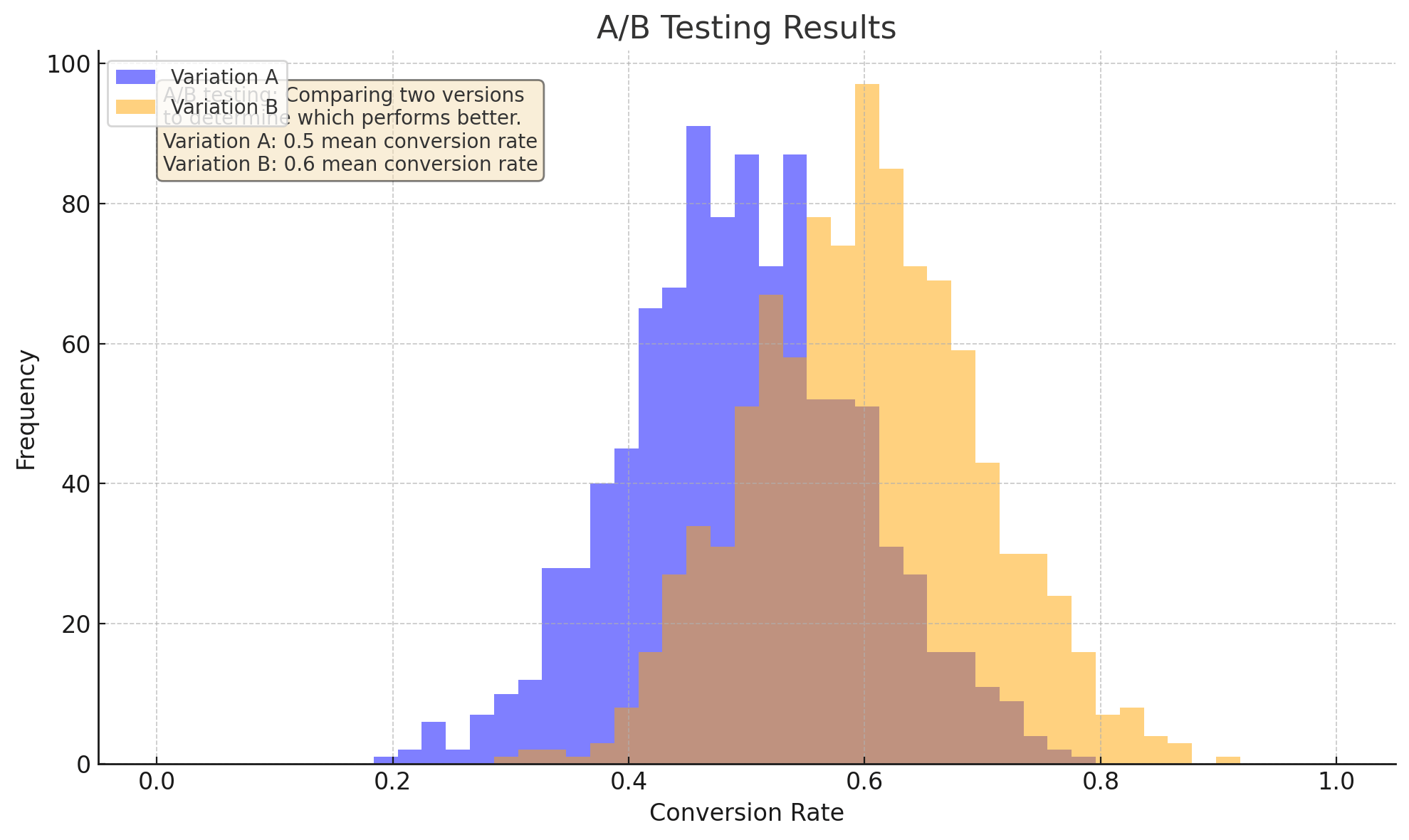

What is A/B Testing?

A/B testing, or split testing, involves creating two versions of a webpage, email campaign, or other digital asset and randomly showing each version to a share of your audience. One version serves as the "control" (the original), while the other is the "variant" (the test version), which contains a single change designed to test a hypothesis.

The goal is to identify which version performs better on a chosen metric, such as user engagement or conversion rate, so that optimization decisions are based on data rather than opinion.

Why A/B Testing Matters

A/B testing is crucial for improving conversion rates because it allows you to:

- Validate assumptions - Test whether specific design elements or copy changes actually improve conversions

- Identify optimal variations - Determine which version performs best, eliminating guesswork

- Reduce uncertainty - Minimize the risk of rolling out changes that hurt conversion rates

How to Conduct A/B Testing in 5 Steps

1. Defining Your Hypothesis

Before starting, establish a clear hypothesis: a testable prediction about how one specific change will affect a metric. For example: "Changing the call-to-action button from blue to red will increase clicks" or "Shortening the sign-up form will increase conversions."

- Identify the variable you want to test (button color, form length)

- Determine your test goal (increase conversions, improve engagement)

- Define metrics for measuring success (conversion rate, click-through rate)
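
Most of these success metrics are simple ratios of counts collected per version. The short sketch below shows that arithmetic; the helper name `rate` and all of the counts are hypothetical, chosen only for illustration, and are not output from any analytics tool.

```python
def rate(successes: int, trials: int) -> float:
    """Return a ratio metric such as conversions / visitors or clicks / impressions."""
    if trials <= 0:
        raise ValueError("trials must be a positive count")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")
    return successes / trials


# Hypothetical counts for one version of a landing page
visitors, conversions = 2_000, 90
impressions, clicks = 2_000, 240

conversion_rate = rate(conversions, visitors)    # conversions per visitor
click_through_rate = rate(clicks, impressions)   # clicks per impression
print(f"Conversion rate: {conversion_rate:.1%}")         # Conversion rate: 4.5%
print(f"Click-through rate: {click_through_rate:.1%}")  # Click-through rate: 12.0%
```

You would compute the same metric for both the control and the variant, and compare them in the analysis step.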

2. Creating Your Control and Variant

- The control version should be your current, unchanged digital asset

- The variant version should feature the specific change being tested

- Ensure both versions are identical except for the variable being tested
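
One way to enforce the "identical except for one variable" rule is to describe each version as a set of settings and check that exactly one setting differs. This is a hypothetical sketch: the setting names are made up for illustration and would map to whatever your page or email template actually exposes.

```python
def changed_fields(control: dict, variant: dict) -> set:
    """Return the names of settings whose values differ between control and variant."""
    all_keys = control.keys() | variant.keys()
    return {key for key in all_keys if control.get(key) != variant.get(key)}


# Hypothetical settings describing each version of a landing page
control = {
    "headline": "Get Started with Our Service Today!",
    "button_color": "blue",
    "form_fields": 5,
}
variant = {**control, "button_color": "red"}  # copy the control, then change one thing

diff = changed_fields(control, variant)
if len(diff) != 1:
    raise ValueError(f"Expected exactly one changed variable, found: {sorted(diff)}")
print(diff)  # {'button_color'}
```

Building the variant as a copy of the control with a single override keeps accidental extra differences out of the test.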

3. Splitting Traffic

To ensure accurate results, split traffic evenly between versions:

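- Use an A/B testing tool (such as Unbounce) to assign visitors to each version at random; Google Optimize, another common choice, was shut down by Google in September 2023

- Randomize assignment so the two groups are comparable; random assignment, not just equal group sizes, is what guards against bias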
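
Testing tools usually handle assignment for you, but the idea is easy to sketch. One common approach, assumed here rather than taken from any particular product, hashes a stable user identifier together with the experiment name, so each visitor is effectively assigned at random yet sees the same version on every visit. The function name, experiment name, and user IDs are hypothetical.

```python
import hashlib
from collections import Counter


def assign_variant(user_id: str, experiment: str, variants=("control", "variant")) -> str:
    """Deterministically assign a user to one of the variants.

    Hashing the experiment name together with the user ID spreads users
    roughly evenly across variants, and the same user always lands in the
    same group on repeat visits.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# Simulate 10,000 hypothetical visitors and count how the split turns out
counts = Counter(assign_variant(f"user-{i}", "button-color-test") for i in range(10_000))
print(counts)  # roughly 5,000 per group
```

Including the experiment name in the hash means a user who lands in the control group for one test is not automatically in the control group for every other test.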

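4. Collecting Data and Analyzing Results

- Use analytics tools (Google Analytics, Mixpanel) to track key metrics for each version

- Compare the two versions with a statistical test suited to your metric, such as a two-proportion z-test for conversion rates

- Declare a winner only when the difference is statistically significant (commonly p < 0.05) and the planned sample size has been reached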
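
For conversion-style metrics (each visitor either converts or does not), one standard choice is the two-proportion z-test. The sketch below uses only the Python standard library and the normal approximation, which is reasonable when each group has plenty of both conversions and non-conversions; the function name and the counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple:
    """Two-sided z-test for the difference between two conversion rates.

    Returns (z, p_value). Group A is the control, group B the variant.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        raise ValueError("z-test undefined when every visitor (or no visitor) converted")
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value: P(|Z| >= |z|) for a standard normal Z
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: control 200/5,000 (4.0%), variant 250/5,000 (5.0%)
z, p = two_proportion_z_test(200, 5_000, 250, 5_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A p-value below your chosen threshold (such as 0.05) suggests the difference is unlikely to be due to chance alone; it does not by itself tell you how large or valuable the improvement is.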

5. Implementing Changes after Drawing a Conclusion

- If the variant performed significantly better, roll it out; otherwise keep the control

- Document findings and create a summary report

- Use insights to inform future optimization efforts
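
The decision and the write-up can be made repeatable with a small helper. This is a hypothetical sketch: it takes the two rates and a p-value from whatever significance test you used, applies a simple "ship only if significantly better" rule, and formats a plain-text summary you could paste into a report. The function names and inputs are illustrative.

```python
def decide(rate_control: float, rate_variant: float, p_value: float, alpha: float = 0.05) -> str:
    """Turn test results into a ship/keep decision."""
    if p_value >= alpha:
        return "keep control: no statistically significant difference"
    if rate_variant > rate_control:
        return "ship variant: significantly better than control"
    return "keep control: variant is significantly worse"


def summary_report(test_name: str, rate_control: float, rate_variant: float,
                   p_value: float, alpha: float = 0.05) -> str:
    """Build a short plain-text summary for documenting the test."""
    if rate_control > 0:
        lift_text = f"{(rate_variant - rate_control) / rate_control:+.1%}"
    else:
        lift_text = "n/a"  # relative lift is undefined when the control rate is zero
    return (
        f"Test: {test_name}\n"
        f"Control rate: {rate_control:.2%} | Variant rate: {rate_variant:.2%}\n"
        f"Relative lift: {lift_text} | p-value: {p_value:.3f} (alpha = {alpha})\n"
        f"Decision: {decide(rate_control, rate_variant, p_value, alpha)}"
    )


# Hypothetical inputs, e.g. taken from a significance test you already ran
print(summary_report("Button color: blue vs red", 0.040, 0.050, 0.016))
```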

A/B Testing Examples

Headline Test

- Original: "Get Started with Our Service Today!"

- Variant: "Unlock the Power of [Service Name] and Start Seeing Results!"

- Goal: Increase conversions by 10%

Test which headline resonates better with your target audience to encourage action.
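
Goals like "increase conversions by 10%" can be read two ways: as a relative lift (for example, 4.0% to 4.4%) or as an absolute gain of 10 percentage points. The sketch below assumes the relative reading and checks a hypothetical result against that goal; the rates and helper names are illustrative only.

```python
def relative_lift(baseline: float, observed: float) -> float:
    """Relative change of observed vs baseline, e.g. 0.125 for +12.5%."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (observed - baseline) / baseline


def meets_goal(baseline: float, observed: float, target_lift: float) -> bool:
    """True if the relative lift reaches the target (e.g. 0.10 for a 10% goal)."""
    return relative_lift(baseline, observed) >= target_lift


# Hypothetical headline test: control converts at 4.0%, variant at 4.5%
control_rate, variant_rate = 0.040, 0.045
lift = relative_lift(control_rate, variant_rate)
points = (variant_rate - control_rate) * 100  # absolute change in percentage points
print(f"Relative lift: {lift:.1%}, absolute change: {points:.1f} percentage points")
print("Meets 10% goal:", meets_goal(control_rate, variant_rate, 0.10))
```

Whichever reading you choose, state it explicitly in the test plan so the goal cannot be reinterpreted after the results come in.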

Button Color Test

- Original button: Blue

- Variant button: Red

- Goal: Increase click-through rate by 5%

Test different button colors to see which stands out more and grabs attention.

Image Test

- Original: Generic stock photo

- Variant: Photo of a real customer alongside their testimonial quote

- Goal: Increase engagement by 15%

Test whether images of real customers resonate better with your audience than stock photos and build trust through real-world examples.

Form Length Test

- Original: 5 questions

- Variant: 3 questions

- Goal: Increase conversions by 12%

Test different form lengths to identify the sweet spot between gathering adequate information and minimizing friction.

Email Subject Line Test

- Original: "Your Account Information"

- Variant: "Important Update to Your Account – Check Now!"

- Goal: Increase open rates by 10%

Test subject lines that grab attention and encourage people to open emails.

Best Practices for A/B Testing

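- Start small and gradually increase complexity

- Keep testing fair: ensure control and variant versions are identical except for the tested variable

- To accelerate learning once you have enough traffic, test several variants against the same control (A/B/n testing), keeping in mind that each extra variant needs more traffic and raises the risk of a false positive

- Monitor and analyze results using data visualization tools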
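
Testing several variants at once means several comparisons against the control, and each is another chance for random noise to produce a false winner: if three comparisons at the 5% level were independent, the chance of at least one false positive would be 1 - 0.95^3, about 14%. One simple, conservative safeguard (an example, not the only option) is the Bonferroni correction, which divides the significance threshold by the number of comparisons. The variant names and p-values below are hypothetical.

```python
def bonferroni_threshold(alpha: float, comparisons: int) -> float:
    """Per-comparison significance threshold under the Bonferroni correction."""
    if comparisons < 1:
        raise ValueError("need at least one comparison")
    return alpha / comparisons


def significant_variants(p_values: dict, alpha: float = 0.05) -> list:
    """Return the variants whose p-value (vs. control) beats the corrected threshold."""
    threshold = bonferroni_threshold(alpha, len(p_values))
    return [name for name, p in p_values.items() if p < threshold]


# Hypothetical p-values from comparing each variant with the same control
p_values = {"red_button": 0.012, "green_button": 0.030, "orange_button": 0.40}
print(significant_variants(p_values))  # ['red_button']
```

Here the corrected threshold is 0.05 / 3, roughly 0.0167, so `green_button` (p = 0.030) would have been declared a winner without the correction but is not with it.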

Common A/B Testing Mistakes

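- Insufficient sample size - Too few participants make results unreliable: a real improvement can go undetected, and random noise can look like a winner

- Inadequate control group - Failing to keep the control version unchanged throughout the test skews results

- Over-testing - Running too many overlapping tests on the same pages or audience makes it hard to tell which change caused an effect and spreads traffic thin, so each test takes longer to conclude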
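
To avoid an underpowered test, estimate the required sample size before launching. The sketch below uses a common normal-approximation formula for comparing two proportions; the 4% baseline, 10% relative minimum detectable effect, 5% significance level, and 80% power are illustrative assumptions you would replace with your own.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline: float, relative_mde: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH group for a two-sided two-proportion test.

    baseline: current conversion rate of the control (e.g. 0.04 for 4%)
    relative_mde: smallest relative lift worth detecting (e.g. 0.10 for +10%)
    """
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("rates must lie strictly between 0 and 1 and differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Hypothetical plan: 4% baseline, detect a 10% relative lift (4.0% -> 4.4%)
print(sample_size_per_group(0.04, 0.10))
```

Under these assumptions the estimate comes to roughly 39,500 visitors per version, which shows why small lifts on low baseline rates need a lot of traffic; larger effects need far fewer visitors.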

Conclusion

A/B testing is an essential tool for optimization and for improving conversion rates. Understanding what it is, how it works, and which best practices to follow equips you to make data-driven decisions that drive results.

Remember: A/B testing is an ongoing process of experimentation, iteration, and optimization. By embracing this iterative approach, you'll continually refine your strategy and achieve greater success in digital marketing.
